Readability refers to how easy it is to read and understand a text, depending on its unique features. It can be measured using metrics such as the number of syllables per sentence or the variety of words used, which are then combined to calculate a ‘level’ and/or a readability score.

If you’ve already analysed texts using the Text Inspector tool, you’ll have seen that part of our linguistic analysis focuses on readability.

In this blog, we’ll be taking a closer look at why readability is important for all language learners and users, how it’s measured and whether it’s an accurate way of analysing a text.

Why is readability important?

Readability is important because it influences how clearly a text can be understood by the reader.

By analysing the readability of a text, we can make that text as clear as possible and better match it with its audience. It’s useful when we’re creating any kind of text and teaching both native and non-native English speakers.

Teachers

When teachers can measure readability, they can decide whether a particular text is suitable for a student. It can help them decide whether it is suitably challenging without being overwhelming. This is true for both teachers of English as a second language and native language teachers of all subjects.

Businesses

By considering readability, businesses can simplify their documents so they are easier to read. This is especially useful when creating guidance materials such as instruction manuals.

Professionals

Readability strongly influences how well readers engage with online texts such as blog posts, web pages and other marketing materials. When professionals such as writers, editors, medical professionals, lawyers and government officials can reliably measure these metrics, they can tailor their texts accordingly and get better business results.

How is readability scored?

Readability is measured by collecting key metrics relating to the text and then using a specific mathematical formula, or collection of formulas, to calculate a score.

This started back in 1921, when the American Edward Thorndike published a book called ‘The Teacher’s Word Book’.

His book considered how often so-called ‘difficult words’ were found in literature and was the first of its kind to apply a formula to language.

Other researchers soon used this formula and built upon it, considering additional factors such as average number of syllables per word, difficulty of words, frequency of words, length of sentences, variety of language used, and so on.

They then compared these results with the average grade levels of students, asking the students questions to check their comprehension. The findings were used to develop various readability formulae.

The most famous of these are perhaps the Flesch-Kincaid Grade Level and Flesch Reading Ease formulae, which consider the number of syllables per 100 words and the average number of words per sentence. These formulae are widely used in software such as Microsoft Word and the SEO tool Yoast.
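To make this concrete, here is a minimal Python sketch of the two Flesch formulas, using their widely published coefficients. The function names and the sample counts are our own, chosen purely for illustration, and we assume the words, sentences and syllables have already been counted.

```python
def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Flesch Reading Ease: a higher score indicates an easier text."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Flesch-Kincaid Grade Level: approximates a US school grade."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


# Hypothetical counts for a short passage: 100 words, 5 sentences, 150 syllables.
ease = flesch_reading_ease(words=100, sentences=5, syllables=150)
grade = flesch_kincaid_grade(words=100, sentences=5, syllables=150)
print(round(ease, 1), round(grade, 1))  # 59.6 9.9
```

Note that the two scales run in opposite directions: on Reading Ease a higher number means an easier text, whereas on the grade scale a lower number means an easier text.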

As time has gone on, linguists have conducted more and more research into readability, and the formulae have become ever more complex. At the time of writing, there are approximately 200 different readability formulae including:

- Flesch Reading Ease Score

- Flesch-Kincaid Grade Level

- Fry Graph Readability Formula

- Gunning Fog Index

- New Dale-Chall Readability Formula

- Powers-Sumner-Kearl

- SMOG

- FORCAST

- Spache

Are there any problems with readability scores?

Even though these readability scores are extremely useful, they’re certainly not foolproof. Here are a few of the reasons why:

1. They don’t tell us much about the overall quality of the text

There are many other factors that influence how easy a text is to read and understand. It’s not just about readability but also things like grammar, voice and spelling.

As Eileen K. Blau pointed out in her study ‘The Effect of Syntax on Readability for ESL Students in Puerto Rico’, we need to combine these readability scores with other factors to gain a better picture of a text’s difficulty. One of these is syntax (how words are combined to make sentences): “…the effect of syntax on readability challenged the usual sentence length criterion of commonly used readability formulas which deem short sentences easy to read…”

2. They’re less useful for shorter texts

If you’ve ever heard the famous parable ‘The Blind Men and the Elephant’, you’ll understand how many mistakes we can make when we only look at a small piece of any picture. (If you haven’t read the story, we highly recommend you do so now.)

The same idea applies when we’re analysing a text. If we only analyse a short text or a small portion of a larger text, we’re only getting a linguistic ‘snapshot’, which may or may not be representative of the whole.

For example, we’re far more likely to see more unique words in a longer text than in a shorter one. The more space we have to expand our ideas, the greater the variety of words we’re going to need to express them.

As linguists Oakland and Lane shared in their 2004 paper ‘Language, Reading, and Readability Formulas: Implications for Developing and Adapting Tests’: “[Use of readability formulas] should be confined to paragraphs and longer passages, not items. Readability methods that consider both quantitative and qualitative variables and are performed by seasoned professionals are recommended.”

This limitation is less of a problem when using the Text Inspector tool. This is because when calculating metrics such as lexical diversity, the tool takes a predefined section of the text and then calculates an average. This keeps analyses comparable, even when dealing with texts of different lengths.
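One common way of making lexical diversity comparable across text lengths is to split the text into equal-sized segments, measure each one and then average the results. The Python sketch below illustrates that general idea only. It is our own simplified example, not Text Inspector’s actual implementation, and the function names, the tokenising rule and the segment size are all assumptions made for illustration.

```python
import re


def tokenize(text: str) -> list[str]:
    """Split text into lowercase word tokens (a deliberately simple rule)."""
    return re.findall(r"[a-z']+", text.lower())


def type_token_ratio(tokens: list[str]) -> float:
    """Unique words (types) divided by total words (tokens)."""
    if not tokens:
        raise ValueError("cannot measure an empty text")
    return len(set(tokens)) / len(tokens)


def mean_segmental_ttr(tokens: list[str], segment_size: int = 50) -> float:
    """Average the type/token ratio over consecutive full-sized segments."""
    starts = range(0, len(tokens) - segment_size + 1, segment_size)
    segments = [tokens[i:i + segment_size] for i in starts]
    if not segments:
        # The text is shorter than one segment, so measure it as a whole.
        return type_token_ratio(tokens)
    return sum(type_token_ratio(s) for s in segments) / len(segments)


short_tokens = tokenize("The cat sat on the mat.")
long_tokens = tokenize("The cat sat on the mat. " * 10)

print(round(type_token_ratio(short_tokens), 2))                   # 0.83
print(round(type_token_ratio(long_tokens), 2))                    # 0.08
print(round(mean_segmental_ttr(long_tokens, segment_size=6), 2))  # 0.83
```

The raw ratio falls sharply for the longer text simply because it is longer, while the segment average gives the same figure for both.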

3. Different readability formulae can give us different results

Because the different readability gradings are calculated using different metrics and mathematical formulas, your results are clearly going to vary according to which you choose.

You may even find that the same text receives a score of grade 9 with one formula, whereas with another it receives a score of grade 7.

That’s why it’s always useful to use several readability formulas together when analysing your text.
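As a rough illustration, the Python sketch below scores one set of counts with two grade-level formulas, Flesch-Kincaid and Gunning Fog, using their standard published coefficients. The counts are hypothetical and the function names are our own. Here we assume ‘complex words’ means words of three or more syllables, which is the usual Gunning Fog definition.

```python
def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Flesch-Kincaid Grade Level from raw counts."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


def gunning_fog(words: int, sentences: int, complex_words: int) -> float:
    """Gunning Fog Index; complex words have three or more syllables."""
    return 0.4 * ((words / sentences) + 100 * (complex_words / words))


# Hypothetical counts for a single passage.
words, sentences, syllables, complex_words = 200, 10, 300, 20

scores = {
    "Flesch-Kincaid Grade": flesch_kincaid_grade(words, sentences, syllables),
    "Gunning Fog": gunning_fog(words, sentences, complex_words),
}

for name, score in scores.items():
    print(f"{name}: {score:.1f}")
# Flesch-Kincaid Grade: 9.9
# Gunning Fog: 12.0
```

Both results are grade levels for the same passage, yet they sit about two grades apart because each formula weighs word length and sentence length differently.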

4. A lower grade level doesn’t always mean that the text is ‘better’

When creating course materials for English language learners, simplifying texts or even writing for an online platform, we’re often told that a lower ‘grade’ (better readability score) means that the text is better.

But this isn’t always the case. It’s important to remember the needs of your unique audience, along with the natural demands of the text.

For example, if you’re analysing a text that is part of a postgraduate degree in Applied Linguistics, you’d expect a higher grade score than if you were creating ESL learning materials for students at CEFR level A1 or A2.

[If you’re interested in politics, you might like to read this 2017 study, ‘The Readability and Simplicity of Donald Trump’s Language’.]

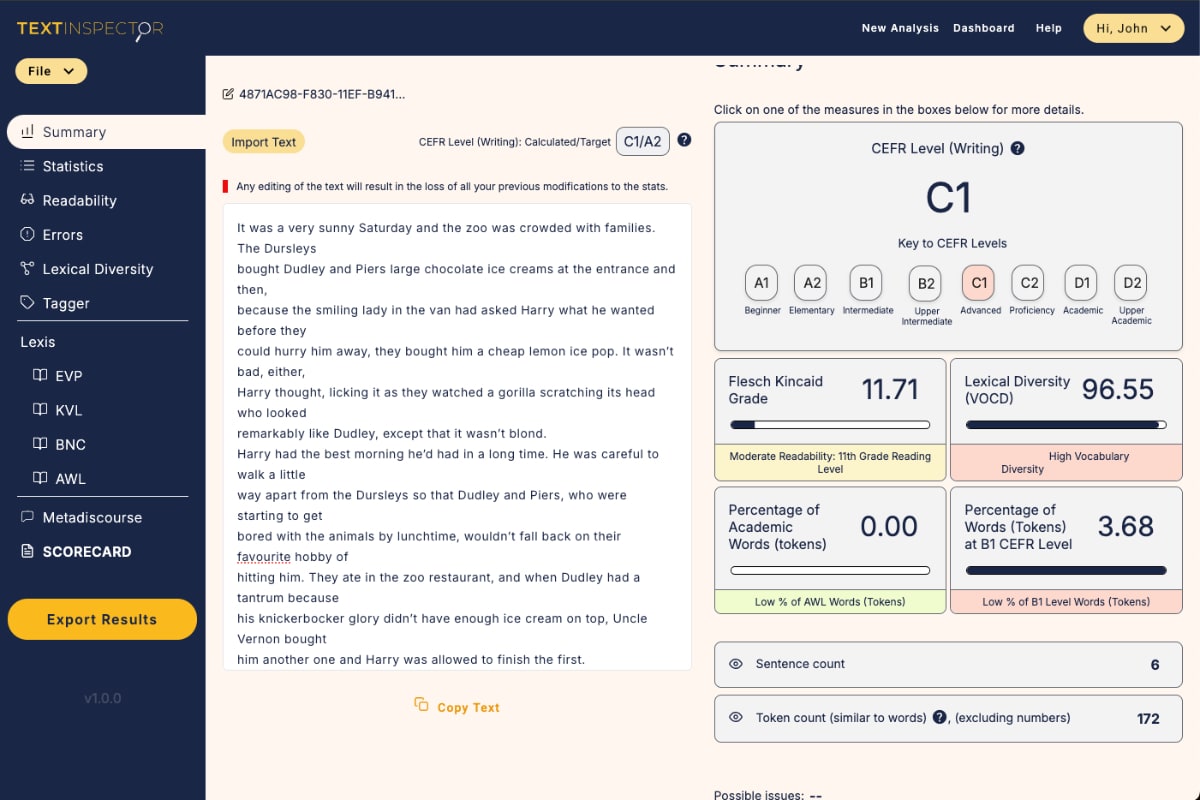

What readability metrics do we use on Text Inspector?

Here at Text Inspector, we use a wide selection of different metrics to provide a broad overview of a text. This allows us to ‘cherry pick’ the best of each to provide a result that is as informative and accurate as possible.

This includes:

- Number of sentences

- Number of words (also known as ‘tokens’)

- Number of unique words (also known as ‘types’)

- Number of syllables (in the entire text)

- Ratio of unique words compared with total words (known as ‘type/token ratio’)

- Average sentence length

- Number of digits in text

- Number of words with more than two syllables

- Percentage of words with more than two syllables

- Average syllables per word, per sentence and per 100 words
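To give a feel for how metrics like these can be gathered, here is a short Python sketch that counts most of them for a sample sentence pair. It is an illustrative approximation rather than the way Text Inspector works: the function names are our own, sentences are split naively on full stops, question marks and exclamation marks, and syllables are estimated by counting vowel groups, which will miscount some English words.

```python
import re


def count_syllables(word: str) -> int:
    """Rough estimate: count vowel groups, ignoring a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def text_metrics(text: str) -> dict:
    """Collect basic readability metrics for a piece of text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    tokens = re.findall(r"[a-z']+", text.lower())
    if not sentences or not tokens:
        raise ValueError("text must contain at least one word")
    syllables = [count_syllables(t) for t in tokens]
    long_words = sum(1 for s in syllables if s > 2)
    return {
        "sentences": len(sentences),
        "tokens": len(tokens),
        "types": len(set(tokens)),
        "syllables": sum(syllables),
        "type_token_ratio": len(set(tokens)) / len(tokens),
        "average_sentence_length": len(tokens) / len(sentences),
        "digits": sum(ch.isdigit() for ch in text),
        "words_over_two_syllables": long_words,
        "percent_over_two_syllables": 100 * long_words / len(tokens),
        "syllables_per_word": sum(syllables) / len(tokens),
        "syllables_per_sentence": sum(syllables) / len(sentences),
        "syllables_per_100_words": 100 * sum(syllables) / len(tokens),
    }


sample = "The cat sat on the mat. It was a sunny day in 2024!"
metrics = text_metrics(sample)
for name, value in metrics.items():
    print(f"{name}: {value}")
```

For the sample above, this gives 2 sentences, 12 tokens, 11 types and 4 digits. A production tool would need a more careful tokeniser and a pronunciation dictionary for reliable syllable counts.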

When you analyse your text using the Text Inspector software, you’ll gain access to full information regarding each of these metrics. Your text will also be scored in terms of the Flesch Reading Ease, Flesch-Kincaid Grade and Gunning Fog Index.

Summary

By measuring a variety of readability metrics and using readability formulas, we can better understand the language level and complexity of the text in question. This can help us identify and create more effective texts and learning materials.

However, as with any analysis of language, readability isn’t without its limitations. That’s why we should bear these in mind, aim for a more ‘global’ understanding of the text in question and use readability as part of a much larger toolbox that can help us better understand language.

For that reason, Text Inspector does far more than consider readability and statistics alone. It also takes a wider look at the language used, with a close focus on vocabulary (one of the key indicators of text difficulty).

By doing this, we can overcome these limitations to provide an in-depth understanding of the language used in any text.

Want to discover the grade level or readability of your text?

Try Text Inspector free here.
